Here is how I learned that a dark factory is not a thought experiment. It is a weekend project that accidentally becomes production infrastructure.

Groupon had a careers site. It was the kind of site you have seen a thousand times: blue gradients, stock photos of people shaking hands, centered text saying "We are passionate about excellence." It said nothing true. It attracted no one worth hiring. The old site lived at grouponcareers.com and it was embarrassing every time a candidate opened it.

I decided to fix it. Not in a quarter. Not with a roadmap. Over a single weekend. The goal was not to ship a perfect site. The goal was to ship a site that told the truth about working at Groupon during an AI transformation, and to build it using the same agentic patterns we were already preaching internally.

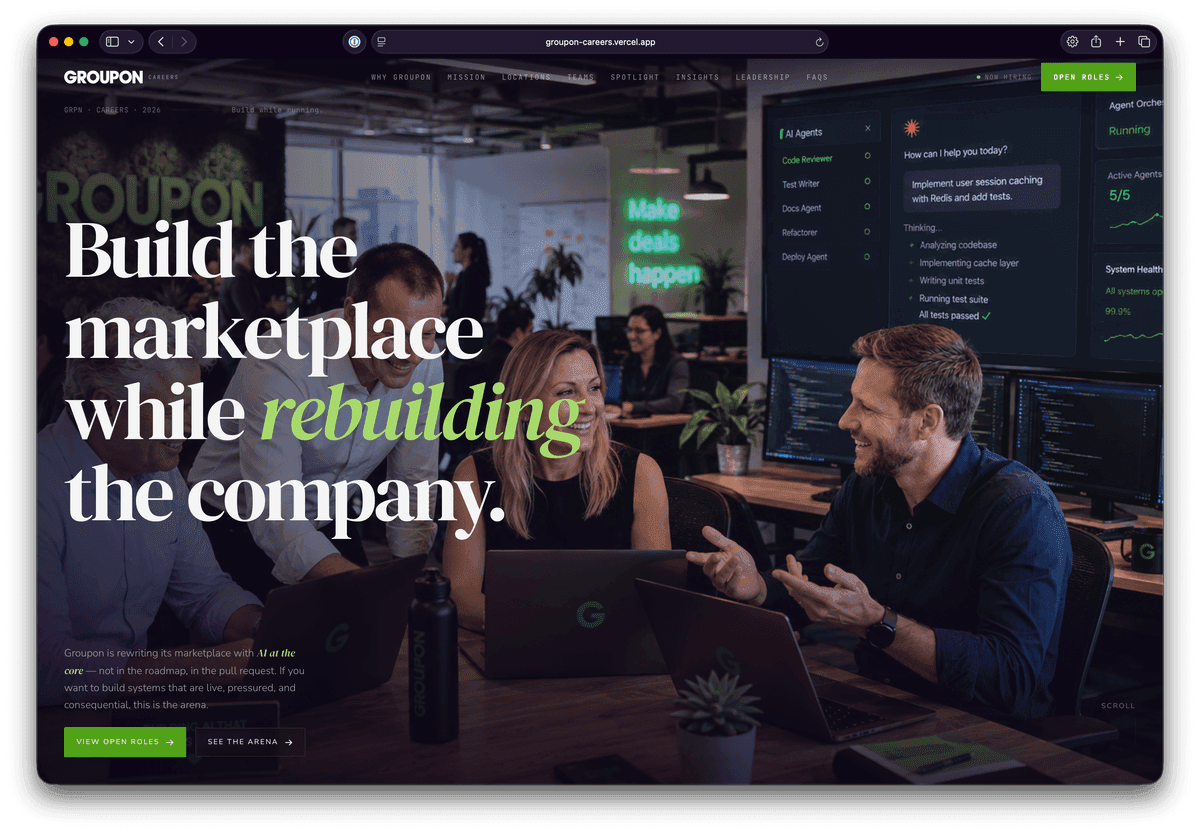

The preview lives at groupon-careers.vercel.app. Go look at it. The difference is not just visual. The old site was a declaration of conditions we wished were true. The new one is a declaration of conditions that actually exist: live marketplace pressure, legacy systems, AI workflows in production, real customer weight. It does not say "join our journey." It says "build while running."

The Old Site: Corporate Slop

Generic blue gradients. Stock photos of handshakes. Text like "We are passionate about excellence" and "join our journey." It said nothing specific enough to be false. It attracted no one who wanted real problems. The site was not broken. It was worse than broken: it was invisible.

The New Site: The Arena

Black background. Amber and copper accents. Sections called The Arena, What AI-Native Means Here, Proof, Not Claims, Builder Profile. It names the exact conditions: legacy systems, real revenue pressure, six AI workflows in production, no clean room. It attracts builders who want leverage, agency, and hard problems. The site was built with Next.js 16, Tailwind 4, MDX content, and a dark factory CMS pipeline that generates spotlights, insights, and location pages from GitHub Issues while the team sleeps.

The build process itself was the experiment. I did not write the content files by hand. I opened GitHub Issues. cms-spotlight-add for employee profiles. cms-insight for leadership articles. cms-location for office pages. The agent read each issue, loaded the correct skills from .claude/skills/, generated headshots via fal-ai/gpt-image-2, wrote the MDX, ran pnpm lint, and opened a PR. I typed /publish on the issue. The PR merged. Vercel deployed.

By Monday morning, the preview site had eight employee spotlights, three insight articles, five location pages, and a full FAQ. None of the content was written by a human sitting at a keyboard. All of it was reviewed by a human before merge. That is the distinction that matters: the factory does not replace judgment. It compresses execution.

What I learned over that weekend became the pipeline this article describes. The BOT_PAT trap, the WAITING/READY signal protocol, the branch detection bug, the recursive comment filter, the media generation fallback chain — every trap in this article was discovered in real time while building the careers preview. The factory taught itself by failing, and I watched the logs.

A dark software factory is a repository where the control plane is not a dashboard or a scheduler. It is the native GitHub surface: Issues for intake, Pull Requests for implementation, Comments for dialogue, and Labels for state machine transitions. AI agents run inside GitHub Actions, reading the same interfaces humans use, producing the same artifacts humans produce, and following the same quality gates humans follow.

The term comes from manufacturing: a dark factory runs without lights because there are no humans on the floor. In software, it means the repository keeps shipping while the team sleeps, attends meetings, or works on harder problems. The humans write specs and design harnesses; the agents write code, generate images, and manage state.

This article is a deep teardown of a production CMS pipeline at Groupon that processes content requests through GitHub Issues. It covers the exact workflow DAGs, the AI configuration surface, the media generation pipeline, the issue type taxonomy, and the specific traps that will break your factory in production. Everything here is drawn from running systems and committed code.

Key Takeaways

- GITHUB_TOKEN cannot fire pull_request events on PRs it creates. You need a BOT_PAT with repo scope, or your CI gates and auto-merge will never trigger.
- The WAITING/READY signal protocol lets an agent in a sandboxed action communicate state to the workflow orchestrator without file system access or side channels.
- Clarification loops require careful actor filtering: exclude bot comments, exclude /publish commands, and never remove the cms-bot-waiting label before PR creation succeeds.
- Quality gates must run on the agent's branch (claude/issue-N-*), not the workflow's checkout of main, or you will silently validate the wrong code.
- Media generation uses fal-ai/flux/dev for insight headers and fal-ai/gpt-image-2 for spotlight headshots, with monthly cap enforcement via .cms-fal-log.json.
- The allowed_tools pattern in anthropics/claude-code-action is a security boundary, not a convenience list. It blocks gh pr create, rm -rf, and any unapproved command.

The Issue Taxonomy: Six Types, Six Skill Loadouts

How the factory classifies work before an agent ever sees it

The factory's job queue is not a free-form inbox. It is a typed taxonomy of six issue labels, each with a strict schema, required fields, and a specific set of skills the agent must load before acting. The label is the routing key. The schema is the contract.

When an issue is opened with a cms-* label, the cms.yml workflow fires. The first thing the agent does is read AGENTS.md and load the skills mapped to that label. This is not optional configuration. It is a mandatory skill-loading protocol that determines whether the agent generates a headshot, expands an article draft, or edits a location page.

| Label | Content Type | Required Fields | Primary Skills | Media Pipeline |
|---|---|---|---|---|
| cms-spotlight-add | Employee profile | name, role, team, quote, photo, 3 Q&A pairs | content-engine, cms-headshot-gen, cms-brand-voice-check, ai-slop-cleaner | generate-headshot.ts → public/images/spotlight/{slug}.png |
| cms-spotlight-edit | Profile update | Fields to change (partial update) | content-engine, cms-brand-voice-check (+ cms-headshot-gen if photo) | Headshot if new photo attached |
| cms-insight | Leadership article | title, author, authorRole, body >= 100 words with factual anchors | cms-article-expand, cms-image-gen, cms-brand-voice-check, ai-slop-cleaner | generate-image.ts → public/images/insights/{slug}.png |
| cms-faq | FAQ entry | question, answer (2–4 sentences, direct voice) | content-engine, cms-brand-voice-check | None |
| cms-location | Office page | city, region, timezone, functions, intro, highlights | content-engine (download photo if provided) | download-attachment.ts only; no generation |
| cms-content-edit | Copy or component change | Target file and desired change | frontend-design for components; cms-brand-voice-check for copy | None |

The taxonomy is rigid for a reason. The agent cannot invent a new issue template. It cannot generate a logo because cms-logo is not a label. It cannot write a component change without the frontend-design skill. The harness is the design: human-authored constraints that prevent the agent from wandering outside its lane.
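The routing idea can be sketched as a lookup table keyed by label. This is a minimal illustration, not the pipeline's code: the names LOADOUTS and route are hypothetical, only four of the six labels are transcribed from the table, and the Q&A-pair requirement for spotlights is left out for brevity.

```typescript
// Label -> loadout routing, transcribed from the taxonomy table.
// All identifiers here are illustrative; the real pipeline drives this from AGENTS.md.
interface Loadout {
  requiredFields: string[];
  skills: string[];
}

const LOADOUTS: Record<string, Loadout> = {
  "cms-spotlight-add": {
    requiredFields: ["name", "role", "team", "quote", "photo"],
    skills: ["content-engine", "cms-headshot-gen", "cms-brand-voice-check", "ai-slop-cleaner"],
  },
  "cms-insight": {
    requiredFields: ["title", "author", "authorRole", "body"],
    skills: ["cms-article-expand", "cms-image-gen", "cms-brand-voice-check", "ai-slop-cleaner"],
  },
  "cms-faq": {
    requiredFields: ["question", "answer"],
    skills: ["content-engine", "cms-brand-voice-check"],
  },
  "cms-location": {
    requiredFields: ["city", "region", "timezone", "functions", "intro", "highlights"],
    skills: ["content-engine"],
  },
};

type Route =
  | { kind: "unroutable" }
  | { kind: "waiting"; label: string; missing: string[] }
  | { kind: "ready"; label: string; skills: string[] };

// Pick the first label that has a loadout, then check the schema contract.
function route(labels: string[], fields: Record<string, string>): Route {
  const label = labels.find((l) => Object.prototype.hasOwnProperty.call(LOADOUTS, l));
  if (label === undefined) return { kind: "unroutable" };
  const loadout = LOADOUTS[label];
  const missing = loadout.requiredFields.filter((f) => (fields[f] ?? "").trim() === "");
  return missing.length > 0
    ? { kind: "waiting", label, missing }
    : { kind: "ready", label, skills: loadout.skills };
}
```

An issue labeled cms-logo returns unroutable because no loadout exists for it, which is the same constraint the taxonomy enforces on the agent.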

For spotlights, the quote field has a specific quality gate: it must be specific enough to be false. A generic quote like "I love working here" fails the audit because it could apply to anyone. The agent must ask for a replacement via the clarification loop. For insights, the body must contain factual anchors (names, dates, tools, outcomes), or the cms-article-expand skill refuses to expand it.

The 11-Workflow DAG: Every Transition, Every Guard

The complete control flow from issue opened to PR merged, including auto-healing and code review

A dark factory is not one massive workflow. It is 11 small workflows that compose into a closed loop. Each workflow owns one state transition, reads one event type, and writes one artifact type. This decomposition is what makes the system debuggable when an agent hallucinates a file path or a label filter misfires.

The 11 workflows are: cms.yml (Issue Intake), issue-clarify.yml (Clarification Loop), cms-ci-healer.yml (CI Auto-Healer), pr-code-review.yml (Code Review + Auto-Fix), cms-publish.yml (Publish/Merge), vercel-preview.yml (Preview Notifications), cms-spend-alert.yml (Cost Monitoring), cms-stale-prs.yml (Stale PR Cleanup), cms-canary.yml (Skill Loading Test), ci.yml (Quality Gates), and pr-checks.yml (Coverage Gate). Alongside them sits the implicit build job that Vercel runs on every push.

Workflow 1: cms.yml — Issue Intake and Agent Execution

The orchestrator that decides whether to implement or wait

cms.yml is the heart of the factory. It triggers on issues.opened and issues.reopened when the issue carries a cms-* label and does not carry cms-bot-waiting. The first gate is the kill switch: vars.CMS_BOT_ENABLED == 'true'. If the repository variable is anything other than 'true', the workflow exits immediately. This is how you halt the entire factory without touching code.
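The intake conditions above reduce to a small predicate. The sketch below is an illustration in TypeScript rather than the workflow's actual `if:` expression; IssueEvent and shouldRunIntake are hypothetical names.

```typescript
// Models the cms.yml trigger guard described above. Illustrative only.
interface IssueEvent {
  action: string;   // e.g. "opened", "reopened", "edited"
  labels: string[]; // label names on the issue
}

function shouldRunIntake(event: IssueEvent, cmsBotEnabled: string | undefined): boolean {
  // Kill switch: anything other than the exact string "true" halts the factory.
  if (cmsBotEnabled !== "true") return false;
  if (event.action !== "opened" && event.action !== "reopened") return false;
  // Issues already waiting on a human belong to the clarification loop.
  if (event.labels.includes("cms-bot-waiting")) return false;
  return event.labels.some((l) => l.startsWith("cms-"));
}
```

Note that the kill switch compares strings, so a variable set to True or 1 also stops the factory.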

The workflow uses pnpm/action-setup@v4 with Node 20 caching, checks out the repo with fetch-depth: 0, installs the genmedia CLI for image generation, and then invokes anthropics/claude-code-action@beta. The action is the agent container. Everything that follows depends on three configuration surfaces: model, allowed_tools, and direct_prompt.

cms.yml — Agent Configuration Surface

```yaml
uses: anthropics/claude-code-action@beta
with:
  anthropic_api_key: ${{ secrets.ANTHROPIC_API_KEY }}
  model: claude-sonnet-4-6
  github_token: ${{ secrets.GITHUB_TOKEN }}
  allowed_tools: >
    Edit,MultiEdit,Glob,Grep,LS,Read,Write,
    Bash(git add:*),Bash(git commit:*),Bash(git push:*),
    Bash(git status:*),Bash(git diff:*),
    Bash(pnpm exec tsx scripts/cms/*),
    Bash(pnpm exec tsx scripts/cms/generate-image.ts:*),
    Bash(pnpm exec tsx scripts/cms/generate-headshot.ts:*),
    Bash(node scripts/cms/*),
    Bash(pnpm lint:*),Bash(pnpm exec tsc:*)
  direct_prompt: |
    Read AGENTS.md. Identify the content type from the issue labels.
    Load the matching skill(s) from .claude/skills/ per the skill-selection table.

    If all required fields are present:
    - Generate content, run brand-voice-check, commit.
    - Output the word READY on its own line at the end.

    If fields are missing:
    - Post a comment listing what's needed.
    - Output the word WAITING on its own line at the end.
    - Stop. Do not commit anything.

    DO NOT run gh pr create, gh issue edit, or any label/PR command.
```

The model field pins the agent to claude-sonnet-4-6. This is not the latest model. It is the calibrated model. The factory was tested against this specific version, and changing it without re-calibrating the allowed_tools patterns and prompt instructions risks breaking the agent's behavior.

The allowed_tools field is the security boundary. It is a comma-separated list in which each permitted shell command is wrapped in Bash(...) and may end in a wildcard for its arguments. Bash(git add:*) allows git add content/spotlights/jana-novotna.mdx, but it does not allow git add . && rm -rf .git, because the action treats the entire string as a single command and rejects &&. This is critical: the action's Bash tool does not support command chaining, pipes, or semicolons. Every Bash call must be a single executable with literal arguments.
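To make the matching rule concrete, here is a toy matcher for entries shaped like Bash(git add:*). It is a sketch of the behaviour described above, not the action's real implementation: isBashAllowed and the CHAINING pattern are assumptions, and entries without the :* suffix are ignored for simplicity.

```typescript
// Illustrative model of prefix-style Bash permissions. NOT the action's actual code.
// Anything that looks like chaining, piping, or substitution is rejected outright.
const CHAINING = /&&|[;|`]|\$\(/;

function isBashAllowed(command: string, allowedTools: string[]): boolean {
  if (CHAINING.test(command)) return false;
  return allowedTools.some((entry) => {
    // Only handles the "Bash(<prefix>:*)" shape used in the listing above.
    const m = /^Bash\((.+):\*\)$/.exec(entry.trim());
    if (m === null) return false;
    const prefix = m[1];
    // The command must be the prefix itself or the prefix followed by arguments.
    return command === prefix || command.startsWith(prefix + " ");
  });
}
```

Under this model, git add content/spotlights/jana-novotna.mdx passes against Bash(git add:*), while git add . && rm -rf .git and gh pr create are both refused.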

The allowed_tools list includes Bash(pnpm exec tsx scripts/cms/*) for cap-checking (cap-check.ts) and image postprocessing (postprocess-image.ts). The full list also includes Bash(genmedia run:*) for media generation via the genmedia CLI; that entry does not appear in the excerpt above.

The direct_prompt is the control surface. It is not a suggestion. It is a script that the agent executes line by line. The prompt ends with an explicit signal instruction: READY or WAITING on its own line. The workflow reads this signal from the action's output JSON file.
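The signal protocol is easy to state as a pure function. The sketch below is one possible reading of "on its own line at the end": it inspects only the last non-empty line of the agent's result text. parseSignal is a hypothetical name, and this check is deliberately stricter than a case-insensitive substring search.

```typescript
// Reads the READY/WAITING signal from the agent's final result text.
// Illustrative sketch; the workflow itself does this in a shell step.
type Signal = "READY" | "WAITING" | "UNKNOWN";

function parseSignal(result: string): Signal {
  const lines = result
    .split(/\r?\n/)
    .map((l) => l.trim())
    .filter((l) => l.length > 0);
  const last = lines.length > 0 ? lines[lines.length - 1] : "";
  if (last === "READY") return "READY";
  if (last === "WAITING") return "WAITING";
  // Anything else is treated as "no signal" so the caller can fail loudly.
  return "UNKNOWN";
}
```

Treating a missing signal as UNKNOWN rather than READY means a truncated or off-script agent run cannot accidentally proceed to PR creation.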

cms.yml — Signal Extraction

```yaml
- name: Add cms-bot-waiting label if bot is waiting
  run: |
    RESULT=$(tail -1 /home/runner/work/_temp/claude-execution-output.json \
      | python3 -c "import json,sys; d=json.load(sys.stdin); print(d.get('result',''))")
    if echo "$RESULT" | grep -qi 'WAITING'; then
      gh issue edit "${{ github.event.issue.number }}" --add-label "cms-bot-waiting"
    fi
  env:
    GH_TOKEN: ${{ secrets.GITHUB_TOKEN }}
```

Workflow 2: issue-clarify.yml — The Clarification Loop

How the factory handles incomplete specs without human intervention

The clarification loop is where most dark factory implementations break. A user opens an issue, the agent asks for a photo, the user uploads one in a comment, and now a second workflow must re-run the agent with the accumulated context. The trigger is issue_comment.created, but the filter is the hardest part of the entire system. The pipeline filters on the comment author, github.event.comment.user.login != 'github-actions[bot]', as a guard against recursive triggering.

You must exclude: bot comments (to prevent recursive self-triggering), /publish commands (handled by cms-publish.yml), /cancel commands, comments on pull requests (not issues), and issues that do not have both cms-bot-waiting and a cms-* label. The Groupon pipeline uses a join-based match for label filtering because GitHub Actions' contains() on arrays does exact matching only. contains(join(labels.*.name, ' '), 'cms-') checks whether the joined label string contains cms- anywhere, which approximates the prefix match this taxonomy needs.
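The exclusions listed above can be modelled as one predicate, which also makes a subtlety visible: a substring test for cms- is already satisfied by cms-bot-waiting itself. The sketch below mirrors the join-and-contains approach and adds a stricter helper; CommentEvent, shouldClarify, and hasCmsTypeLabel are hypothetical names, not part of the pipeline.

```typescript
// Illustrative model of the issue-clarify.yml filter.
interface CommentEvent {
  isPullRequest: boolean; // true when the comment is on a PR, not an issue
  commenter: string;      // comment author login
  body: string;
  labels: string[];
}

function shouldClarify(e: CommentEvent, cmsBotEnabled: string): boolean {
  const joined = e.labels.join(" ");
  return (
    cmsBotEnabled === "true" &&
    !e.isPullRequest &&
    e.commenter !== "github-actions[bot]" &&
    joined.includes("cms-bot-waiting") &&
    joined.includes("cms-") && // substring match: already true whenever cms-bot-waiting is present
    !e.body.startsWith("/publish") &&
    !e.body.startsWith("/cancel")
  );
}

// A stricter check, if a real content-type label must also be present.
function hasCmsTypeLabel(labels: string[]): boolean {
  return labels.some((l) => l.startsWith("cms-") && l !== "cms-bot-waiting");
}
```

With labels of only cms-bot-waiting, shouldClarify returns true while hasCmsTypeLabel returns false, so a pipeline that needs both conditions may want the stricter form.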

issue-clarify.yml — Actor and Label Filter

```yaml
if: |
  vars.CMS_BOT_ENABLED == 'true'
  && !github.event.issue.pull_request
  && github.event.comment.user.login != 'github-actions[bot]'
  && contains(join(github.event.issue.labels.*.name, ' '), 'cms-bot-waiting')
  && contains(join(github.event.issue.labels.*.name, ' '), 'cms-')
  && !startsWith(github.event.comment.body, '/publish')
  && !startsWith(github.event.comment.body, '/cancel')
```

The clarification workflow has a pre-download step that runs before the agent. It extracts photo URLs from the comment thread using gh issue view --comments --json comments, downloads them with curl and GITHUB_TOKEN auth, and writes them to public/images/spotlight/{slug}.jpg. This solves two sandbox problems at once: the agent cannot access /tmp, and GitHub attachment URLs expire after a short time. By downloading images in a workflow step, the photos are available in the repo workspace when the agent starts.

A critical bug that appeared during calibration: removing the cms-bot-waiting label before PR creation. If the label is removed early and gh pr create fails, the issue no longer has the waiting label, so issue-clarify.yml will not re-trigger on the user's next reply. The issue is stranded. The fix is to remove the label only after successful PR creation, in the success path of the same step that creates the PR.
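The ordering rule can be shown with injected dependencies, so the example runs without GitHub. This is a sketch under assumptions: Deps and openPrThenClearWaiting are hypothetical names, and in the real workflow both operations are gh CLI calls inside one step.

```typescript
// Remove cms-bot-waiting only after PR creation has succeeded. Illustrative sketch.
interface Deps {
  createPr: () => number;               // returns the PR number, throws on failure
  removeLabel: (label: string) => void;
}

function openPrThenClearWaiting(deps: Deps): number | null {
  let pr: number;
  try {
    pr = deps.createPr();
  } catch {
    // PR creation failed: leave the label in place so the user's next reply
    // still re-triggers the clarification loop.
    return null;
  }
  deps.removeLabel("cms-bot-waiting");
  return pr;
}
```

Reversing the two calls reproduces the stranded-issue bug: a failed createPr would leave an issue with neither a PR nor the waiting label.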

Workflow 3: cms-ci-healer.yml — The Auto-Healer

When CI fails, the factory heals itself

The CI Auto-Healer is the most recent addition to the factory. It triggers on workflow_run.completed when the conclusion is failure, watching CI and CMS — Process content request workflows. It downloads the run log archive via gh api repos/{repo}/actions/runs/{id}/logs, extracts the last 200 error lines, and invokes Claude Code on the exact failing SHA (not main).

The agent receives the error context and a diagnostic prompt. It creates a fix/ci-healer-{run_id}-{sha} branch, pushes it, applies the fix, and opens a PR. The pr-code-review.yml workflow explicitly skips fix/ci-healer-* branches to prevent the review loop from re-triggering on its own healing PRs.
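Two small pieces of this flow are easy to express as pure functions: trimming a log to its last error lines, and naming the fix branch. The sketch uses the same match terms as the workflow's grep (error, Error, FAIL) and the branch pattern given above; extractErrorLines and healerBranch are hypothetical names.

```typescript
// Keep the last `limit` lines that look like failures. Illustrative sketch.
function extractErrorLines(log: string, limit = 200): string[] {
  const matches = log.split(/\r?\n/).filter((line) => /error|Error|FAIL/.test(line));
  return matches.slice(-limit);
}

// Branch name in the fix/ci-healer-{run_id}-{sha} shape described above.
function healerBranch(runId: number, sha: string): string {
  return `fix/ci-healer-${runId}-${sha}`;
}
```

Keeping the tail rather than the head matters because the last errors in a CI log are usually closest to the step that actually failed.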

cms-ci-healer.yml — Trigger and Log Fetch

```yaml
on:
  workflow_run:
    workflows:
      - CI
      - CMS — Process content request
    types: [completed]

jobs:
  heal:
    if: github.event.workflow_run.conclusion == 'failure'
    runs-on: ubuntu-latest  # assumed runner; not shown in the original excerpt
    steps:
      - uses: actions/checkout@v4.2.2
        with:
          ref: ${{ github.event.workflow_run.head_sha }}
      - uses: pnpm/action-setup@v4
      - uses: actions/setup-node@v4.4.0
        with:
          node-version: '20'
          cache: 'pnpm'
      - name: Fetch failing run logs
        # env block assumed: the excerpt uses REPO and RUN_ID without defining them
        env:
          GH_TOKEN: ${{ secrets.GITHUB_TOKEN }}
          REPO: ${{ github.repository }}
          RUN_ID: ${{ github.event.workflow_run.id }}
        run: |
          gh api "repos/${REPO}/actions/runs/${RUN_ID}/logs" > /tmp/logs.zip
          unzip /tmp/logs.zip -d /tmp/run-logs
          grep -h "error\|Error\|FAIL" /tmp/run-logs/*.txt | tail -200 > /tmp/errors.txt
      - name: Run Claude Code to diagnose and fix
        uses: anthropics/claude-code-action@beta
        with:
          anthropic_api_key: ${{ secrets.ANTHROPIC_API_KEY }}
          model: claude-sonnet-4-6
          allowed_tools: >-
            Edit,MultiEdit,Glob,Grep,LS,Read,Write,
            Bash(git add:*),Bash(git commit:*),Bash(git push:*),
            Bash(pnpm lint:*),Bash(pnpm exec tsc:*)
          direct_prompt: |
            Analyze the CI failure logs at /tmp/errors.txt.
            Identify the root cause. Create a fix on branch fix/ci-healer-{run_id}-{sha}.
            Run pnpm lint and pnpm exec tsc --noEmit before committing.
```

Workflow 4: pr-code-review.yml — Review and Auto-Fix

Every PR gets a Claude review before human eyes see it

The PR Code Review workflow runs on every pull request against main (opened, synchronize, reopened). It fetches the full diff with git diff base...head, pipes it to Claude Code for a structured code review, and then — if the review finds must_fix or should_fix issues — invokes a second pass to auto-fix them on the same branch.

The workflow has a critical guard: it skips branches starting with fix/ci-healer- or claude/. Without this, a bot-fix PR would trigger another review, which might trigger another fix, creating an infinite loop. The allowed_tools list is narrower than the CMS workflows: it excludes media generation and headshot scripts, focusing only on lint, typecheck, and test tools.

pr-code-review.yml — Guard and Diff

```yaml
on:
  pull_request:
    branches: [main]
    types: [opened, synchronize, reopened]

jobs:
  review-and-fix:
    if: |
      !startsWith(github.head_ref, 'fix/ci-healer-') &&
      !startsWith(github.head_ref, 'claude/')
    runs-on: ubuntu-latest  # assumed runner; not shown in the original excerpt
    steps:
      - uses: actions/checkout@v4.2.2
        with:
          fetch-depth: 0
          ref: ${{ github.event.pull_request.head.sha }}
      - uses: pnpm/action-setup@v4
      - uses: actions/setup-node@v4.4.0
        with:
          node-version: '20'
          cache: 'pnpm'
      - name: Fetch diff for review
        # env block assumed: the excerpt uses BASE_SHA and HEAD_SHA without defining them
        env:
          BASE_SHA: ${{ github.event.pull_request.base.sha }}
          HEAD_SHA: ${{ github.event.pull_request.head.sha }}
        run: |
          git diff "${BASE_SHA}...${HEAD_SHA}" > /tmp/pr.diff
          echo "DIFF_SIZE=$(wc -c < /tmp/pr.diff)" >> "$GITHUB_ENV"
      - name: Code review + auto-fix
        uses: anthropics/claude-code-action@beta
        with:
          anthropic_api_key: ${{ secrets.ANTHROPIC_API_KEY }}
          model: claude-sonnet-4-6
          allowed_tools: >-
            Edit,MultiEdit,Glob,Grep,LS,Read,Write,
            Bash(git add:*),Bash(git commit:*),Bash(git push:*),
            Bash(pnpm lint:*),Bash(pnpm exec tsc:*),Bash(pnpm test:*)
          direct_prompt: |
            Review the diff at /tmp/pr.diff.
            Phase 1: /code-review — find must_fix and should_fix issues.
            Phase 2: If issues found, /fix them on this branch.
            Run pnpm lint and pnpm exec tsc --noEmit after fixes.
```

Workflow 5: PR Creation — The BOT_PAT Problem

Why GITHUB_TOKEN is not enough, and how to work around it

Here is the single most expensive trap in dark factory engineering. When a GitHub Actions workflow creates a PR using the default GITHUB_TOKEN, that PR's pull_request events do not start new workflow runs; GitHub suppresses them to prevent recursive runs. This means your ci.yml, your pr-checks.yml, your required status checks, and your auto-merge rules will never trigger. The PR sits open, green in the sense that no checks failed, but unmergeable because no checks ran.

The fix is a Personal Access Token with repo scope, stored as BOT_PAT. PRs created with BOT_PAT fire events normally because GitHub treats them as user-created, not action-created. The tradeoff is that you must rotate this token and scope it to the minimum repositories.

In the Groupon pipeline, BOT_PAT is used only for gh pr create. All subsequent operations — labeling, commenting, merging — use GITHUB_TOKEN so the comments are attributed to github-actions[bot] and do not re-trigger issue workflows.

PRs created with GITHUB_TOKEN:

- Does not fire pull_request events
- CI workflows and status checks never trigger
- Required checks show as Expected but never run
- Auto-merge rules cannot evaluate
- PR remains unmergeable indefinitely

PRs created with BOT_PAT:

- Fires pull_request events normally
- CI workflows, status checks, and required checks trigger automatically
- Branch protection rules evaluate correctly
- Auto-merge rules can proceed on green CI
- Human-like event semantics with machine execution

The PR creation step uses gh pr merge --squash --admin for same-day merges when the cms-approved label is applied. This bypasses the 24-hour branch protection delay for bot PRs that have passed all quality gates. The step also runs quality gates: pnpm lint and pnpm exec tsc --noEmit. These must run on the agent's branch, not on the workflow's checkout of main. A common latent bug is checking out main, then running lint against main while the agent's commits sit on an undetected branch. The fix is to git checkout the action branch after detecting it with git branch -r | grep origin/claude/issue-N-....
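Branch detection is the step most likely to match the wrong thing, so it is worth sketching. The function below takes the lines printed by git branch -r and returns the agent branch for one issue. detectAgentBranch is a hypothetical name, and the branch suffixes in the usage example are invented for illustration.

```typescript
// Find the agent's branch for an issue among `git branch -r` output lines.
// Illustrative sketch; the workflow does this with grep in a shell step.
function detectAgentBranch(remoteBranches: string[], issueNumber: number): string | null {
  // The trailing hyphen keeps issue 1 from matching issue 12.
  const prefix = `origin/claude/issue-${issueNumber}-`;
  const hit = remoteBranches.map((b) => b.trim()).find((b) => b.startsWith(prefix));
  return hit === undefined ? null : hit.slice("origin/".length);
}

// Example input, shaped like `git branch -r` output (suffixes are made up):
const exampleBranches = [
  "  origin/main",
  "  origin/claude/issue-12-20250101",
  "  origin/claude/issue-1-20250102",
];
const branchForIssue1 = detectAgentBranch(exampleBranches, 1);
```

Returning null when nothing matches lets the caller fail the step instead of silently linting main.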

The PR body includes a cost report generated by scripts/cms/cost-report.ts. This script reads .cms-fal-log.json for fal.ai spend and estimates Anthropic cost from token counts or issue body length. Every automated PR carries its own price tag in the description.

Workflow 6: cms-publish.yml — Human Approval as a Label

How /publish becomes cms-approved becomes squash-merged

The publish workflow has two jobs that communicate through labels, not through shared state or message queues. Job 1 listens for /publish comments on issues, finds the associated bot PR by searching for Closes #N in PR bodies, verifies the PR has the bot-generated label, and adds cms-approved. Job 2 listens for pull_request.labeled events where the label is cms-approved and the PR has the bot-generated label, and immediately squash-merges with gh pr merge --squash --admin.

This label-based coordination is robust against failure. If the associated PR is not bot-generated (missing the bot-generated label), Job 1 replies with a warning and exits. If the user types /publish before the bot has opened a PR, Job 1 replies with a helpful message and exits cleanly. If gh pr create failed, no PR exists, so the label-based merge trigger never fires. As long as cms-bot-waiting is still on the issue, the state machine degrades gracefully: the user replies again, the clarification loop re-triggers, and the system recovers.
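Job 1's decision can be sketched as a function over the open PR list. This is an illustration of the three outcomes described above, with hypothetical names (PullRequest, PublishOutcome, resolvePublish); the real job performs the lookup through the GitHub CLI.

```typescript
// Decide what /publish on an issue should do. Illustrative sketch.
interface PullRequest {
  number: number;
  body: string;
  labels: string[];
}

type PublishOutcome =
  | { kind: "approve"; pr: number }           // add cms-approved to this PR
  | { kind: "not-bot-generated"; pr: number } // reply with a warning
  | { kind: "no-pr" };                        // reply that no PR exists yet

function resolvePublish(prs: PullRequest[], issueNumber: number): PublishOutcome {
  // Word boundaries keep "Closes #1" from matching "Closes #12".
  const closes = new RegExp(`\\bCloses #${issueNumber}\\b`);
  const pr = prs.find((p) => closes.test(p.body));
  if (pr === undefined) return { kind: "no-pr" };
  if (!pr.labels.includes("bot-generated")) return { kind: "not-bot-generated", pr: pr.number };
  return { kind: "approve", pr: pr.number };
}
```

Because every outcome is a plain value, the two failure paths end in a comment and a clean exit rather than a thrown error.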

cms-publish.yml — Merge Job

```yaml
auto-merge:
  if: |
    github.event_name == 'pull_request'
    && github.event.action == 'labeled'
    && github.event.label.name == 'cms-approved'
    && contains(join(github.event.pull_request.labels.*.name, ' '), 'bot-generated')
  steps:
    - name: Merge PR (squash)
      env:
        GH_TOKEN: ${{ secrets.BOT_PAT }}
      run: gh pr merge "${{ github.event.pull_request.number }}" --squash --admin
```

Workflow 7: Media Generation Pipeline

How fal.ai, flux, and gpt-image-2 generate assets inside a CI job

The factory does not just write code. It generates images. Two distinct pipelines handle media: insight article headers and spotlight headshots. Both use fal.ai, both enforce a monthly spend cap, and both exit with structured JSON that the workflow parses.

The insight header pipeline lives in scripts/cms/generate-image.ts. It uses fal-ai/flux/dev as the primary model and fal-ai/flux/schnell as the fallback. The suffix ". No text, no logos." is appended to every prompt to prevent the model from rendering watermarks or typography. The output is resized to 1200×630 via sharp, EXIF-stripped, and written atomically to public/images/insights/{slug}.png.

The headshot pipeline lives in scripts/cms/generate-headshot.ts. It uses fal-ai/gpt-image-2 as the primary model (image-to-image conditioning) and fal-ai/flux-pro/kontext as the fallback. The input photo is base64-encoded and passed as image_url. The canonical STYLE_PROMPT is professional headshot, light neutral background, soft fill lighting, square crop 1:1, no text, photographic style. The output is 1024×1024, EXIF-stripped, and written to public/images/spotlight/{slug}.png.

generate-image.ts — Model Selection and Cap Enforcement

```typescript
// Excerpt. Imports for the fal client, sharp, and fs, plus the local helpers
// readCurrentMonthSpend() and logGeneration(), are omitted as in the original.
const PRIMARY_MODEL = 'fal-ai/flux/dev';
const FALLBACK_MODEL = 'fal-ai/flux/schnell';

export async function checkCap(currentSpendUsd: number, capUsd: number): Promise<void> {
  // Hard stop at 90% of the monthly cap.
  if (currentSpendUsd >= capUsd * 0.9) {
    throw new Error(
      `Monthly fal.ai spend ($${currentSpendUsd.toFixed(2)}) is at or above 90% of the cap ($${capUsd}). Image generation paused.`
    );
  }
}

export async function generateInsightImage(slug: string, prompt: string): Promise<string> {
  const cap = parseFloat(process.env.FAL_MONTHLY_CAP_USD ?? '50');
  const currentSpend = readCurrentMonthSpend();
  await checkCap(currentSpend, cap);

  fal.config({ credentials: process.env.FAL_KEY });
  const fullPrompt = `${prompt}. No text, no logos.`;

  let imageUrl: string;
  try {
    const result = await fal.run(PRIMARY_MODEL, {
      input: {
        prompt: fullPrompt,
        image_size: 'landscape_16_9',
        num_inference_steps: 28,
        guidance_scale: 3.5,
        num_images: 1,
      },
    });
    imageUrl = result.images[0].url;
  } catch (primaryErr) {
    // Primary model failed: retry once on the faster fallback model.
    const fallback = await fal.run(FALLBACK_MODEL, {
      input: { prompt: fullPrompt, image_size: 'landscape_16_9', num_inference_steps: 4 },
    });
    imageUrl = fallback.images[0].url;
  }

  // The download step is not shown in the original excerpt; sketched here with fetch.
  const imageBuffer = Buffer.from(await (await fetch(imageUrl)).arrayBuffer());
  const processedBuffer = await sharp(imageBuffer).resize(1200, 630).png().toBuffer();

  // atomic write: tmp → rename (paths follow the prose above; the exact code is assumed)
  const outPath = `public/images/insights/${slug}.png`;
  fs.writeFileSync(`${outPath}.tmp`, processedBuffer);
  fs.renameSync(`${outPath}.tmp`, outPath);

  logGeneration(0.09);
  return outPath;
}
```

Both pipelines share a cost logging mechanism: .cms-fal-log.json. It tracks requests and costUsd per month. The checkCap function throws when spend reaches 90% of FAL_MONTHLY_CAP_USD. This is not a soft warning. It is a hard stop that prevents the agent from burning the monthly budget on a runaway generation loop.

The download-attachment.ts script handles location photos and user-uploaded spotlight photos. It validates MIME type (image/*), enforces a 10 MB streaming byte cap, guards against path traversal in the slug, strips EXIF via sharp.rotate().png(), and writes atomically using a temp file and fs.renameSync. It also checks that the output path is not a symlink or special file before overwriting.
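Three of those checks are pure validation and can be sketched without touching the file system. The slug pattern below is an assumption about what a safe slug looks like, and validateAttachment is a hypothetical name; the real script also enforces the byte cap while streaming, which this size check does not model.

```typescript
// Pre-flight checks for an uploaded attachment. Illustrative sketch only.
const MAX_BYTES = 10 * 1024 * 1024; // 10 MB cap
// Assumed slug shape: lowercase words joined by single hyphens. Rejects "../" and "/".
const SLUG = /^[a-z0-9]+(?:-[a-z0-9]+)*$/;

function validateAttachment(slug: string, mimeType: string, byteLength: number): string[] {
  const problems: string[] = [];
  if (!SLUG.test(slug)) problems.push("slug must be lowercase letters, digits, and single hyphens");
  if (!mimeType.startsWith("image/")) problems.push("MIME type must be image/*");
  if (byteLength > MAX_BYTES) problems.push("file exceeds 10 MB");
  return problems;
}
```

Returning a list of problems instead of throwing on the first one lets the agent report everything the user needs to fix in a single clarification comment.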

Workflow 8: Cost Governance

How the factory tracks and controls inference spend

Cost governance in a dark factory is not an afterthought. It is a first-class concern because the dominant cost is LLM inference, and runaway agents can burn through a daily budget before anyone notices. The Groupon pipeline has three cost control layers: per-PR cost reporting, daily spend alerts, and monthly media caps.

scripts/cms/cost-report.ts generates a markdown cost block for every PR. It reads .cms-fal-log.json for fal.ai spend and estimates Anthropic cost using Claude Opus pricing: $15/MTok input, $75/MTok output. Because the workflows pin a Sonnet model, these Opus rates should be read as a conservative estimate rather than an exact bill. If the action does not expose per-run token counts (which claude-code-action@beta does not as of the latest calibration), the script falls back to estimating from ISSUE_BODY_LENGTH: ~4 characters per token, plus a 2,000 token base for context, with output assumed at 30% of input.
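The fallback estimate is simple arithmetic, worked through below. The constants come from the paragraph above; the function name estimateFromBodyLength and the rounding choices (ceil for input tokens, round for output tokens) are assumptions.

```typescript
// Estimate Anthropic cost from issue body length when token counts are unavailable.
// Sketch under stated assumptions; rounding behaviour is not specified in the source.
const INPUT_COST_PER_TOKEN = 15 / 1_000_000;  // $15/MTok
const OUTPUT_COST_PER_TOKEN = 75 / 1_000_000; // $75/MTok
const CHARS_PER_TOKEN = 4;
const BASE_CONTEXT_TOKENS = 2000;
const OUTPUT_RATIO = 0.3;

function estimateFromBodyLength(issueBodyLength: number): {
  inputTokens: number;
  outputTokens: number;
  costUsd: number;
} {
  const inputTokens = Math.ceil(issueBodyLength / CHARS_PER_TOKEN) + BASE_CONTEXT_TOKENS;
  const outputTokens = Math.round(inputTokens * OUTPUT_RATIO);
  const costUsd = inputTokens * INPUT_COST_PER_TOKEN + outputTokens * OUTPUT_COST_PER_TOKEN;
  return { inputTokens, outputTokens, costUsd };
}
```

For a 4,000-character issue body this gives 3,000 input tokens and 900 output tokens, or roughly $0.11 (3,000 × $0.000015 + 900 × $0.000075).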

scripts/cms/check-daily-spend.ts runs on a cron at 07:00 UTC (after stale PR cleanup at 06:00 UTC). It estimates yesterday's total spend from .cms-fal-log.json and .cms-token-log.json, compares it against DAILY_SPEND_THRESHOLD_USD (default 50), and opens a GitHub issue with label ops-cost-alert if the threshold is exceeded. The issue body includes a JSON breakdown and instructions to set CMS_BOT_ENABLED=false to pause the factory.

cost-report.ts — Pricing Constants

```typescript
const ANTHROPIC_INPUT_COST_PER_TOKEN = 15 / 1_000_000; // $15/MTok
const ANTHROPIC_OUTPUT_COST_PER_TOKEN = 75 / 1_000_000; // $75/MTok
const FAL_COST_PER_REQUEST = 0.09;

// Shape inferred from the fields formatCostReport reads; not shown in the original excerpt.
interface CostData {
  anthropicCostUsd: number;
  anthropicInputTokens: number;
  anthropicOutputTokens: number;
  falCostUsd: number;
  falRequests: number;
}

export function estimateCost(params: { inputTokens: number; outputTokens: number }): number {
  return params.inputTokens * ANTHROPIC_INPUT_COST_PER_TOKEN +
    params.outputTokens * ANTHROPIC_OUTPUT_COST_PER_TOKEN;
}

// Renders the markdown cost block appended to each bot PR body.
export function formatCostReport(data: CostData): string {
  const total = data.anthropicCostUsd + data.falCostUsd;
  return `### Cost\n\n- Anthropic: ~$${data.anthropicCostUsd.toFixed(2)}` +
    ` (${data.anthropicInputTokens.toLocaleString()} input, ${data.anthropicOutputTokens.toLocaleString()} output)\n` +
    `- fal.ai: $${data.falCostUsd.toFixed(2)} (${data.falRequests} request${data.falRequests === 1 ? '' : 's'})\n` +
    `- Total: $${total.toFixed(2)}`;
}
```

Workflow 9: Production Traps

What breaks when you run this at scale, and the exact fix

- Use allowed_tools patterns with no wildcards on dangerous commands. The action rejects &&, |, and ; in Bash calls.
- The agent commits to claude/issue-N-* but the workflow checks out main. Always detect the action branch with git branch -r and git checkout it before running quality gates.
- Log spend to .cms-fal-log.json for media and .cms-token-log.json for inference.
- Filter github-actions[bot], claude-code-action[bot], and any other app actors explicitly. Use github.event.comment.user.login, not github.event.actor.
- cms-stale-prs.yml uses actions/stale@v9 with only-pr-labels: bot-generated and days-before-pr-stale: 7. It closes the PR and re-opens the source issue.

11 workflows: intake, clarification, publish, preview, spend, stale, canary, CI, PR checks, CI healer, and code review.

The Groupon CMS pipeline required 30+ iterative commits to stabilize the clarification loop and BOT_PAT behavior.

Claude Code agent cost per content request is measured via cost-report.ts and appended to each PR body.

fal.ai cost per request for gpt-image-2 or flux/dev is logged in .cms-fal-log.json; the genmedia CLI abstracts provider switching.

Dark Factory Production Readiness Checklist

What to verify before calling it lights-out


- BOT_PAT secret configured with repo scope and correct repository access
- GITHUB_TOKEN-created PRs confirmed to NOT fire pull_request events (tested)
- BOT_PAT-created PRs confirmed to fire CI workflows and required checks
- Agent allowed_tools list excludes gh pr create, gh issue edit, and rm -rf
- WAITING/READY signal protocol tested with both missing and complete issues
- Clarification loop filters exclude bot comments, /publish, /cancel, and PR comments
- cms-bot-waiting label is removed ONLY after successful PR creation, never before
- Quality gates run on the agent's branch, not the workflow's checkout of main
- Cost report generated and appended to every bot PR body
- Kill switch repository variable (CMS_BOT_ENABLED) tested and documented
- cms-stale-prs.yml closes unmerged bot PRs after 7 days and re-opens source issues
- cms-spend-alert.yml warns when daily agent costs exceed threshold
- cms-ci-healer.yml triggers on workflow_run.completed with conclusion=failure, checks out the failing SHA, and opens a fix PR
- pr-code-review.yml skips bot branches (fix/ci-healer-*, claude/*) to prevent infinite review-fix loops
- vercel-preview.yml uses deployment_status event and looks up the source issue by PR SHA, not by label
- FORCE_JAVASCRIPT_ACTIONS_TO_NODE24 is set in all workflow env blocks to force the action runtime
- generate-image.ts and generate-headshot.ts enforce 90% monthly cap via .cms-fal-log.json
- download-attachment.ts validates MIME type, enforces 10MB cap, and strips EXIF
- vercel-preview.yml posts preview URLs to source issues on deployment success

Can I use this without Claude Code?

Yes. The architecture is independent of the agent implementation. You could use OpenAI's Codex CLI, GitHub Copilot's agent mode, or a custom LLM client inside the action. The critical interfaces are the same: the agent reads a prompt, emits files, runs git commands, and signals READY or WAITING. What changes is the action wrapper and the allowed_tools pattern.

How do I prevent the agent from deleting production data?

Three layers: First, the allowed_tools pattern blocks dangerous Bash commands. Second, branch protection rules on main prevent direct pushes. Third, the agent never runs on main; it always commits to a feature branch. Even if the agent went rogue, it could only delete files on a branch that gets reviewed before merge.

What happens when the agent produces broken code?

The quality gates catch it before PR creation. If pnpm lint or pnpm exec tsc --noEmit fails, the PR creation step fails and the workflow posts an error comment on the issue. The user can reply with corrections, which triggers the clarification loop again. In practice, most agent errors are syntax-level and fixable with a one-sentence correction in the issue thread.

How much does this cost to run?

The dominant cost is LLM inference. A typical content request costs $0.02–$0.50 in Claude API tokens depending on complexity. Image generation via fal.ai adds $0.09 per request. GitHub Actions minutes are negligible: private repos include 2,000 free minutes per month. The cost-report.ts script tracks per-PR spend so you can set monthly caps via FAL_MONTHLY_CAP_USD and daily alerts via DAILY_SPEND_THRESHOLD_USD.
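
The cap and alert logic reduces to two sums over a spend log. The sketch below assumes a log of dated entries; the SpendEntry shape and function names are hypothetical, while the 90% monthly cap and the two environment variable names come from the pipeline described here.

```typescript
// Assumed log entry shape. The real .cms-fal-log.json format may differ.
interface SpendEntry {
  date: string; // ISO date, "YYYY-MM-DD"
  usd: number;
}

// Sums entries whose date starts with the given prefix,
// e.g. "2026-01" for a month or "2026-01-15" for a day.
function spendFor(entries: SpendEntry[], datePrefix: string): number {
  return entries
    .filter((entry) => entry.date.startsWith(datePrefix))
    .reduce((total, entry) => total + entry.usd, 0);
}

// Media generation is allowed only below 90% of the monthly cap
// (capUsd would be read from FAL_MONTHLY_CAP_USD).
function canGenerate(
  entries: SpendEntry[],
  month: string,
  capUsd: number,
  capFraction: number = 0.9
): boolean {
  return spendFor(entries, month) < capUsd * capFraction;
}

// The daily alert fires when a day's spend passes the threshold
// (thresholdUsd would be read from DAILY_SPEND_THRESHOLD_USD).
function exceedsDailyThreshold(
  entries: SpendEntry[],
  day: string,
  thresholdUsd: number
): boolean {
  return spendFor(entries, day) > thresholdUsd;
}
```

Stopping at 90% rather than 100% leaves headroom for a request that is already in flight when the cap check runs.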

Can multiple agents run in parallel?

Yes, but they need isolation. The Groupon pipeline uses Docker sandboxing via the Claude Code action. For broader multi-agent patterns, where one agent implements, another reviews, and a third merges, see the Dark Factory CLI by Peter Stratton, which orchestrates three independent Claude Code instances with separate permissions.

What happens if fal.ai goes down?

Both generate-image.ts and generate-headshot.ts have fallback models: flux/dev falls back to flux/schnell, and gpt-image-2 falls back to flux-pro/kontext. If the primary model and its fallback both fail, the agent enters the clarification loop and asks the user to provide an image manually. The factory degrades gracefully rather than crashing.
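
The fallback chain is a loop over models that stops at the first success. Here is a minimal sketch under the assumption that each model is wrapped in a function that resolves to an image URL or throws; ImageGenerator, ModelStep, and the needs_user_image status are hypothetical names, not the actual code in generate-image.ts.

```typescript
// Resolves to an image URL, or rejects if the model call fails.
type ImageGenerator = (prompt: string) => Promise<string>;

interface ModelStep {
  model: string;
  generate: ImageGenerator;
}

type ImageOutcome =
  | { status: "ok"; model: string; url: string }
  | { status: "needs_user_image"; errors: string[] };

async function generateWithFallback(
  prompt: string,
  chain: ModelStep[]
): Promise<ImageOutcome> {
  const errors: string[] = [];
  for (const step of chain) {
    try {
      const url = await step.generate(prompt);
      return { status: "ok", model: step.model, url };
    } catch (err) {
      // Record the failure and move on to the next model in the chain.
      const message = err instanceof Error ? err.message : String(err);
      errors.push(step.model + ": " + message);
    }
  }
  // Every model failed: hand control back to the clarification loop
  // instead of throwing, so the workflow run itself does not crash.
  return { status: "needs_user_image", errors };
}
```

Returning a status instead of throwing is what lets the caller ask the user for a manual image rather than failing the whole run.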

What is the CI Auto-Healer?

cms-ci-healer.yml is a workflow that triggers when ci.yml or cms.yml fails. It checks out the exact failing SHA, downloads the run logs, and invokes Claude Code with a diagnostic prompt. The agent reads the error logs, identifies the root cause, opens a fix PR on a fix/ci-healer-{run_id}-{sha} branch, and posts a comment on the original issue. This closes the loop between failure and fix without human intervention.

How does the PR Code Review workflow work?

pr-code-review.yml runs on every PR against main. It fetches the full diff and invokes Claude Code for a two-phase review: first /code-review to find issues, then /fix to auto-fix them on the same branch. It skips branches opened by the auto-healer (fix/ci-healer-*) or the CMS bot (claude/*) to prevent infinite loops. The review comments are posted to the PR, and fixes are pushed as additional commits.
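
The loop guard is just a prefix check on the PR's head branch. The sketch below pairs it with the healer's branch naming scheme; the function names are hypothetical, and whether the real branch uses the full or shortened SHA is not something this sketch assumes.

```typescript
// Branch prefixes owned by bots: the CI auto-healer and the CMS agent.
const BOT_BRANCH_PREFIXES = ["fix/ci-healer-", "claude/"];

// Builds a healer branch name in the fix/ci-healer-{run_id}-{sha} format.
function healerBranch(runId: number, sha: string): string {
  return "fix/ci-healer-" + runId + "-" + sha;
}

// The review workflow should run only on branches a bot did not open.
// Otherwise review pushes a fix, the fix triggers review, and so on.
function shouldReview(headBranch: string): boolean {
  return !BOT_BRANCH_PREFIXES.some((prefix) => headBranch.startsWith(prefix));
}
```

Matching on branch prefix rather than PR author is the simpler choice here, since both bots may push with the same token.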

Why does the preview workflow use deployment_status instead of pull_request?

vercel-preview.yml listens on deployment_status because Vercel previews are triggered by pushes, not PR events. The workflow receives the deployment SHA, looks up the associated PR via gh api /repos/{repo}/commits/{sha}/pulls, extracts the Closes #N reference from the PR body, and posts a state-specific comment on the source issue. This handles pending, in-progress, success, and failure states with different messages.
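
The last two steps, pulling the issue number out of the PR body and choosing a message per state, can be sketched as pure functions. The comment wording and function names are hypothetical; only the Closes #N convention and the four states come from the workflow described above.

```typescript
type DeploymentState = "pending" | "in_progress" | "success" | "failure";

// Finds the first "Closes #N" reference in a PR body, case-insensitively.
function extractClosedIssue(prBody: string): number | null {
  const match = /\bcloses\s+#(\d+)/i.exec(prBody);
  return match ? Number(match[1]) : null;
}

// Picks a state-specific comment. The wording here is illustrative only.
function previewComment(state: DeploymentState, previewUrl: string): string {
  switch (state) {
    case "pending":
      return "Preview deployment is queued.";
    case "in_progress":
      return "Preview deployment is building.";
    case "success":
      return "Preview is ready: " + previewUrl;
    case "failure":
      return "Preview deployment failed. Check the deployment logs.";
  }
}
```

A PR body with no Closes reference yields null, which the caller should treat as "nothing to comment on" rather than an error.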

A dark software factory is not about removing humans from software development. It is about removing humans from the parts that do not need them: the translation from spec to branch, from branch to PR, from PR to merge, and from merge to deployed preview. The human still writes the spec, still approves the merge with /publish, and still designs the harness that constrains the agent via AGENTS.md and allowed_tools.

What changes is the latency. A content request that once sat in a backlog for two days now ships in twenty minutes. A bug report that once required finding an available engineer now generates a fix while the reporter is still writing the reproduction steps. The factory does not replace judgment; it compresses execution.

The 11 workflow files in this article are under 700 lines combined. The complexity is not in the code; it is in the edge cases. The BOT_PAT behavior, the recursive trigger prevention, the branch detection timing, the WAITING/READY signal protocol, and the media generation fallback chains are all learned from failed runs, not from documentation. Build the simple version first, run it for a week, and let the failures teach you what to guard against. The factory that cannot fail gracefully is not a factory. It is a bomb.

- [1] Dark Factory: Go CLI for autonomous agent orchestration (Peter Stratton, 2026) (github.com)

- [2] Claude Software Factory: 6 GitHub Actions workflows for self-running repos (Grey Newell, 2026) (github.com)

- [3] Dark Factory Documentation (godarkfactory.com)

- [4] Dark Factory GitHub Copilot CLI skill with sealed testing (DUBSOpenHub, 2026) (github.com)

- [5] Software Factory: FastAPI-based issue/PR autonomous development system (Sun Praise, 2026) (github.com)